Introduction

A GPU Job Scheduler is a tool that manages and schedules the allocation of GPUs in a cluster environment. It enables efficient use of GPU resources by allocating them to the jobs that need them. Schedulers also provide a unified interface for submitting, monitoring, and controlling the execution of GPU jobs in clusters. Although schedulers can be very useful to systems administrators, they have drawbacks when it comes to maximizing utilization and performance.

The Top 4 Utilization Issues with GPU Job Schedulers

1. How Schedulers measure and report utilization:

Schedulers measure and report utilization in terms of VRAM assignment. A job that reserves most of a GPU's memory counts as using that GPU, even if it keeps only a fraction of the cores and execution units busy. As a result, unoptimized code that consumes more VRAM than it should causes cores and execution capabilities to be over-provisioned to jobs that don't require them.
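The gap between "VRAM reserved" and "compute actually used" can be made concrete with a small sketch. It assumes an 80 GiB GPU and per-job telemetry with two fields: memory reserved, and the fraction of cores/execution units kept busy. The names `JobSample`, `vram_view`, `compute_view`, and `over_provisioned`, and all of the numbers, are hypothetical. None of this is a real scheduler's API or any real telemetry format.

```python
from dataclasses import dataclass

GPU_VRAM_GIB = 80.0  # assumed memory size of one GPU (hypothetical)


@dataclass
class JobSample:
    """One hypothetical telemetry sample for a job running on a single GPU."""
    name: str
    vram_reserved_gib: float  # memory the job has claimed on the GPU
    compute_busy: float       # fraction of cores/execution units doing work, 0.0-1.0


def vram_view(job: JobSample) -> float:
    """Utilization as a VRAM-assignment report sees it: share of memory reserved."""
    return job.vram_reserved_gib / GPU_VRAM_GIB


def compute_view(job: JobSample) -> float:
    """Utilization of the cores and execution units the job actually keeps busy."""
    return job.compute_busy


def over_provisioned(jobs: list[JobSample], min_gap: float = 0.5) -> list[str]:
    """Names of jobs that look 'fully used' by VRAM but leave most compute idle."""
    return [j.name for j in jobs if vram_view(j) - compute_view(j) >= min_gap]


jobs = [
    JobSample("train-a", vram_reserved_gib=76.0, compute_busy=0.30),
    JobSample("infer-b", vram_reserved_gib=72.0, compute_busy=0.15),
    JobSample("train-c", vram_reserved_gib=40.0, compute_busy=0.85),
]

for j in jobs:
    print(f"{j.name}: VRAM view {vram_view(j):.0%}, compute view {compute_view(j):.0%}")
print("over-provisioned:", over_provisioned(jobs))
```

In this toy data, `train-a` and `infer-b` look nearly saturated from a VRAM-assignment point of view while leaving most of their compute idle. That is the kind of over-provisioning described above.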

2. Schedulers only suggest fixes to improve utilization:

When it comes to improving utilization, schedulers only suggest which jobs should be reviewed, resized, or recoded. It is then up to the user to implement these fixes on an ongoing basis.

3. Limited virtualized GPU partitioning capabilities:

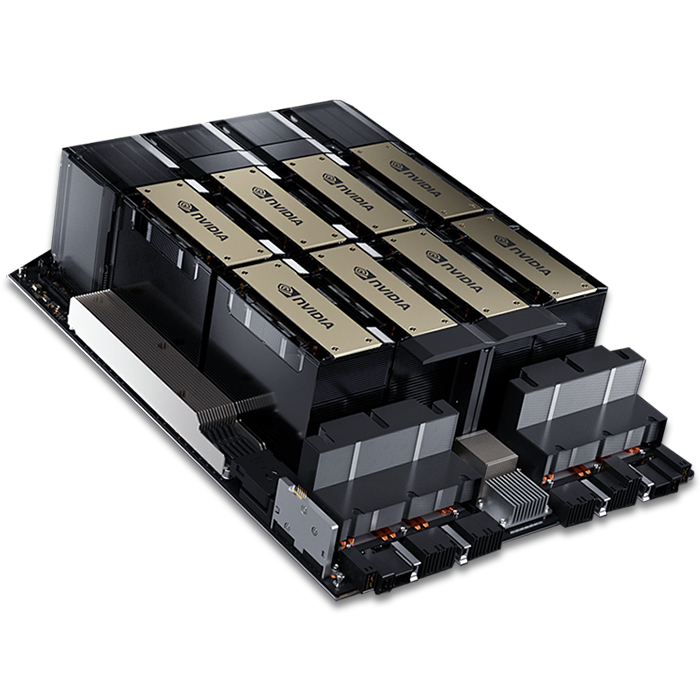

Virtualizing GPUs and partitioning them into smaller vGPUs can drastically increase utilization by letting multiple jobs share one GPU (multi-tenancy). However, the legacy partitioning tools that job schedulers rely on, such as NVIDIA Multi-Instance GPU (MIG), max out at seven slices per GPU and can only attach a single slice to a virtual machine. MIG is also available only on a limited set of NVIDIA data center GPUs, such as the A100, A30, and H100.

4. No live resizing/redistribution of VRAM:

Schedulers cannot resize VRAM at runtime, whether for jobs that would benefit from more memory or for jobs that would be unaffected by less. The result is underutilized VRAM and missed opportunities to work through job queues faster.

How Arc Compute Addresses these Issues

1. How schedulers measure and report utilization:

Arc Compute's hypervisor, ArcHPC, can move cores and execution capabilities to where they are needed during runtime, eliminating underutilized or unused cores and execution capabilities. This feature, called Simultaneous Multi-Virtual GPU (SMVGPU), enables 90%-100% utilization of cores and execution capabilities, drastically reducing job completion times.

2. Schedulers only suggest fixes to improve utilization:

ArcHPC doesn't report suggestions to resize, recode, or review jobs based on their utilization numbers. Instead, our technology optimizes automatically at runtime, resolving the issues jobs face while sharing the same underlying hardware without the need for human intervention.

3. Limited virtualized GPU partitioning capabilities:

Unlike MIG, which is limited to a maximum of 7 slices per GPU, ArcHPC + SMVGPU has no such limit and can slice a GPU into an arbitrary number of vGPUs for multi-tenancy. It can size, resize, and split slices without limitations. ArcHPC can also attach multiple virtualized slices from numerous GPUs to a single VM, and it is not limited to MIG-enabled GPU models.
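The back-of-the-envelope model below shows why a per-GPU slice cap matters for multi-tenancy. It is a simplified sketch, not MIG's or ArcHPC's actual allocator, and it rests on these assumptions:

- Each job's demand is a whole-number percentage of one GPU.
- In the capped case, every job is rounded up to whole 1/7 slices.
- MIG's real profile sizes, placement rules, and memory-slice layout are ignored.

The helper names and the 40-job workload are hypothetical.

```python
GPU_CAPACITY = 100  # work in integer percent of one GPU to avoid float rounding
MIG_MAX_SLICES = 7  # per-GPU slice cap cited above


def first_fit_decreasing(sizes: list[int], capacity: int) -> int:
    """Pack item sizes into bins of `capacity` using first-fit decreasing; return bin count."""
    free: list[int] = []  # remaining room in each opened bin (GPU)
    for size in sorted(sizes, reverse=True):
        for i, room in enumerate(free):
            if room >= size:
                free[i] -= size
                break
        else:  # no open bin had room, so open a new one
            free.append(capacity - size)
    return len(free)


def gpus_fixed_slices(demands_pct: list[int], max_slices: int = MIG_MAX_SLICES) -> int:
    """Capped model: each job is rounded up to whole slices of 1/max_slices of a GPU."""
    slices = [(d * max_slices + GPU_CAPACITY - 1) // GPU_CAPACITY for d in demands_pct]
    return first_fit_decreasing(slices, max_slices)


def gpus_fine_grained(demands_pct: list[int]) -> int:
    """Uncapped model: each job gets exactly the share it asks for."""
    return first_fit_decreasing(demands_pct, GPU_CAPACITY)


demands = [5] * 40  # 40 small jobs, each needing about 5% of a GPU (hypothetical)
print("fixed 7-slice model:", gpus_fixed_slices(demands))
print("fine-grained model: ", gpus_fine_grained(demands))
```

Under these assumptions, small jobs waste most of each 1/7 slice they occupy. Finer-grained partitioning packs the same jobs onto fewer GPUs.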

4. No live resizing/redistribution of VRAM:

ArcHPC will soon support VRAM reallocation at runtime for workloads sharing a GPU, without requiring a reboot. This feature will increase utilization and performance even further.
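As a generic illustration of what redistributing VRAM among co-located workloads involves, the sketch below works in two steps:

- It reclaims memory each workload holds above its observed peak plus some headroom.
- It hands that reclaimed memory to the workloads that could use more.

This is a hypothetical policy written for illustration. It is not ArcHPC's API or algorithm. `Tenant`, `rebalance`, `headroom_gib`, and the example numbers are all assumptions. The sketch also ignores the hard parts of real reallocation, such as migrating live allocations and fragmentation.

```python
from dataclasses import dataclass


@dataclass
class Tenant:
    """A hypothetical workload sharing one GPU's VRAM with others."""
    name: str
    allocated_gib: int  # VRAM currently granted to the workload
    peak_used_gib: int  # highest VRAM use observed recently
    wanted_gib: int     # VRAM the workload could productively use


def rebalance(tenants: list[Tenant], headroom_gib: int = 2) -> tuple[dict[str, int], int]:
    """Redistribute a GPU's VRAM among tenants without changing the total.

    Returns the new per-tenant grants and any memory left unassigned.
    """
    total = sum(t.allocated_gib for t in tenants)

    # Step 1: reclaim slack by shrinking each grant to its observed peak plus
    # headroom (nobody grows at this stage).
    grants = {t.name: min(t.allocated_gib, t.peak_used_gib + headroom_gib) for t in tenants}
    pool = total - sum(grants.values())

    # Step 2: give reclaimed memory to the tenants with the largest unmet demand first.
    by_need = sorted(tenants, key=lambda t: t.wanted_gib - grants[t.name], reverse=True)
    for t in by_need:
        extra = min(pool, max(0, t.wanted_gib - grants[t.name]))
        grants[t.name] += extra
        pool -= extra

    return grants, pool


# Two jobs split an 80 GiB GPU evenly; one barely uses its half, the other is starved.
tenants = [
    Tenant("job-a", allocated_gib=40, peak_used_gib=12, wanted_gib=14),
    Tenant("job-b", allocated_gib=40, peak_used_gib=39, wanted_gib=60),
]
grants, unassigned = rebalance(tenants)
print(grants, "unassigned:", unassigned)
```

With a static split, `job-b` stays capped at 40 GiB while `job-a` sits on memory it never touches. Rebalancing at runtime lets the same GPU serve both workloads better.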

View comparison showing how MIG and SMVGPU differ when training multiple jobs on a single GPU

Conclusion

GPU schedulers complement ArcHPC: they optimize GPU utilization through VRAM assignment and ensure that organizations consistently have jobs provisioned to their compute clusters. However, ArcHPC is the only solution available that addresses the opportunities schedulers miss, namely:

- cores and execution capabilities over-provisioned because of poorly optimized code;
- the limitations of MIG;
- VRAM resizing on GPUs across nodes and clusters.

While the initial version of ArcHPC doesn't replace every function of a GPU scheduler, it sits below schedulers in the data center tech stack. This means a scheduler can run on top of ArcHPC and integrate with it seamlessly.